Last week, at TXCPA Houston’s annual Fall Accounting Conference & Technology Symposium (F.A.C.T.S.), speaker after speaker addressed the future prospects of A.I. Although much of the content was optimistic in tone, an undercurrent of concern permeated the presentations.

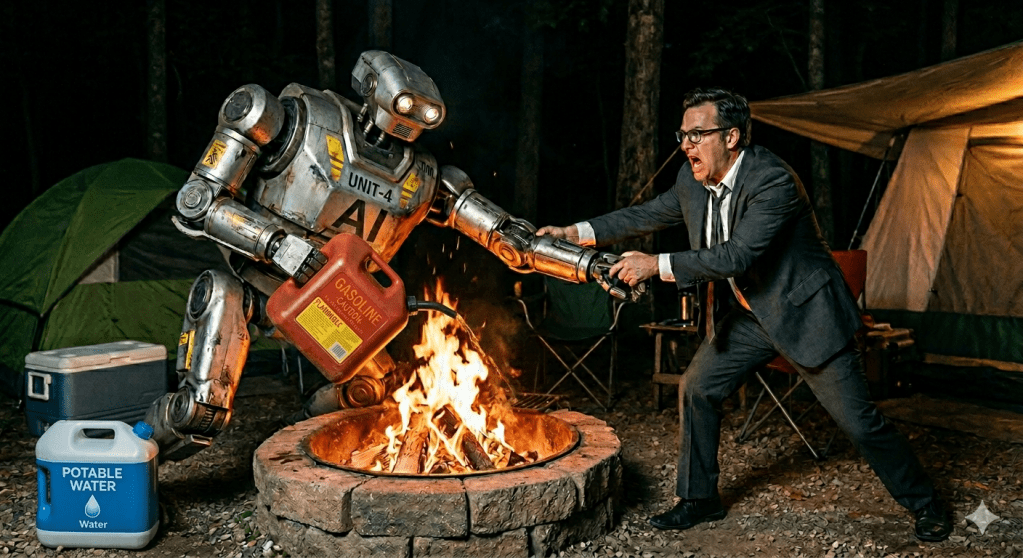

Why? It’s likely that A.I. applications will soon be capable of performing many current human functions in accounting and finance. Thus, if you’re a staff auditor who “traces and agrees” numbers that appear on different computer screens, or if you copy numbers from accounting documents to income tax forms, your activities are particularly vulnerable to automation via A.I. systems.

There is a specific career path within the accounting sector, though, that will likely experience explosive growth because of A.I.’s increasing use. The Symposium speakers referred to it as A.I. Governance and Risk Management.

Why is that a growth sector? Any new technology that performs an important activity inevitably malfunctions from time to time. Audit assurance activities must thus be applied to it, and measurements must be devised to manage the risk of technical failure. And over time, as a technology grows more proficient at lower-level tasks, it tends to be applied to higher-level tasks, thereby generating the need for higher-level assurance activities.

It may seem ironic that this projected job growth is expected to arise within the assurance function, a traditional service on which the entire public accounting profession was founded in the late 1800s. Nevertheless, if you’re concerned that your accounting career path may be rendered obsolete by A.I. applications, you may wish to consider a role that addresses the risks of implementing those applications.

Information about the A.I. Governance and Risk Management functions can be found on the websites of the Big Four accounting firms and many other assurance practices. Consulting firms outside of the accounting sector publish helpful information too, including those owned by firms in the human resources sector. And more technical information can be found on the websites of publications that focus on data security and process management.

Furthermore, to communicate directly with the authors, speakers, and thought leaders of the profession, you might consider attending future conferences of TXCPA Houston. The organization, for instance, has already begun to develop its 2026 Spring Technology & Accounting Resources Summit (S.T.A.R.S.). A.I. topics are sure to play a prominent role in the agenda of that event.